Drones used to collect information for drought management, disease protection and pesticide application

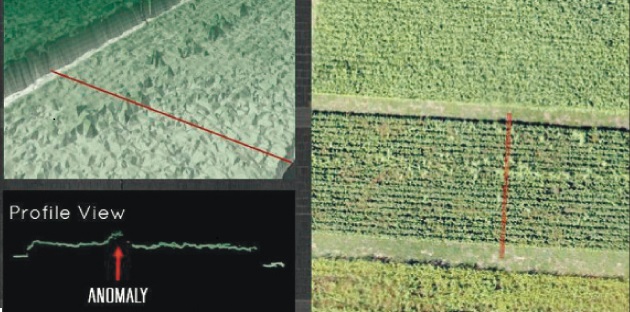

Anomalies in plant height are just one of the things that a camera can capture. | Vivian Faria image

The buzz about using unmanned aerial vehicles, also known as drones, for precision agriculture is getting louder among producers looking to improve their bottom line.

The technology continues to evolve, improve and become more specific for the job at hand. Drones are now considered less a gadget and more a specialized tool.

The ultimate goal of the flying computers is to gather field data and provide more insight about what’s causing variability. Producers can then use the information for better decision-making.

Vivian Faria, a remote sensing and geographic information systems specialist for Agri-Trend’s Sky Scout Program, said UAVs are beginning to replace satellite imagery because of their ability to fly below cloud cover. This has resulted in the development of new algorithms to monitor nutrient management, pest control, plant diseases, irrigation and yield prediction.

“Growers are already using information coming from drones for drought management, disease protection, irrigation and pesticide application,” Faria told Agri-Trend’s recent Farm Forum Event in Saskatoon.

“UAVs provide producers with information about each area of the field, so they can water or apply inputs only where needed — saving money and reducing the farm’s environmental footprint.”

She said drones can provide three types of detailed views:

- Aerial images show patterns in the field caused by irrigation problems, nutrient deficiencies or pest infestations.

- Visual spectrum and multispectral images using infrared can differentiate between healthy and distressed plants.

- Time-lapse crop surveys, flown weekly, daily or hourly, can highlight problem areas and opportunities for better crop management.

Faria said having the right sensor (camera) is the key to collecting good field data.

For agriculture, sensor options cover wavelengths from 400 to 2,500 nanometres (a nanometre is one billionth of a metre).

Green plant leaves typically display low reflectance in the visible range of 400 to 700 nm because photosynthetic pigments absorb most of that light.

Vegetation's green colour is the result of a reflectance peak near 550 nm, while near-infrared wavelengths of 700 to 1,300 nm are reflected strongly because of leaf cell structure.

Leaf chlorophyll content falls as plants age or undergo environmental stress. With less chlorophyll absorbing light, visible reflectance rises and broadens beyond the green peak, so the tissue appears yellow.

Near-infrared reflectance also decreases because the leaf's internal structure has changed.

Shortwave infrared of 1,300 to 2,500 nm relates to the leaves’ water content, as well as dry carbon compounds when the leaves wilt and dry. Leaves’ reflectance generally increases as their water content decreases. The shortwave infrared band can be used to detect plant drought stress.
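One common way to turn the shortwave infrared response into a single number is a normalized difference between a near-infrared band and a shortwave infrared band. The following is a minimal sketch only: the function name `moisture_index` and the sample reflectance values are hypothetical and do not come from Faria's presentation.

```python
def moisture_index(nir: float, swir: float) -> float:
    """Normalized difference of near-infrared and shortwave infrared reflectance.

    Values fall between -1 and 1. Because leaf SWIR reflectance rises as
    water content falls, lower values suggest drier vegetation.
    """
    total = nir + swir
    if total == 0:
        return 0.0  # no signal in either band; avoid dividing by zero
    return (nir - swir) / total


# Hypothetical reflectance values (fractions between 0 and 1)
well_watered = moisture_index(nir=0.50, swir=0.20)
drought_stressed = moisture_index(nir=0.40, swir=0.35)
print(round(well_watered, 3), round(drought_stressed, 3))
```

In this made-up example the drought-stressed canopy scores lower because its shortwave infrared reflectance is closer to its near-infrared reflectance.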

Available sensors include visual, multispectral, hyperspectral, thermal, radar/SAR, radiometer and lidar.

Faria said sensor types that are in development include pollen, chemical sampling, water sampling, meteorological sensor package and water source detection/geological features.

Visual sensors refer to the traditional RGB digital camera systems capable of high resolution, low distortion, still or video images and variable focal lengths. They can be used for aerial mapping, plant counting, land surveying and surveillance.

Multispectral sensors are used widely for assessing plant health and water quality, estimating nitrogen, vegetation index calculation, plant counting, weed detection and crop stress detection.

They range from three to 12 channels of blue, green and near infrared, with some allowing for vegetation index calculation. More channels help farmers and agronomists generate specific analysis.
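The most widely used vegetation index is the normalized difference vegetation index (NDVI), which contrasts near-infrared and red reflectance. The sketch below assumes the sensor records a red channel alongside near infrared; the 2x2 grids are hypothetical values, not data from the Sky Scout Program.

```python
from typing import List

Grid = List[List[float]]


def ndvi(nir: Grid, red: Grid) -> Grid:
    """Compute NDVI = (NIR - Red) / (NIR + Red) for each pixel."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Pixels with no signal in either band are set to 0.0
            row.append((n - r) / total if total else 0.0)
        result.append(row)
    return result


# Hypothetical reflectance grids (fractions between 0 and 1):
# the top row mimics a dense green canopy, the bottom row a stressed one.
nir_band = [[0.60, 0.55], [0.30, 0.25]]
red_band = [[0.05, 0.08], [0.20, 0.22]]

for row in ndvi(nir_band, red_band):
    print([round(v, 2) for v in row])
```

Healthy vegetation reflects strongly in near infrared and weakly in red, so it scores closer to 1, while stressed or sparse cover scores closer to 0.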

Faria said sensors have not reached the point of detecting diseases.

“Diseases are a challenge and people sometimes expect too much, but with multispectral you can identify the severity levels. That helps you optimize your chemicals,” she said.

“The point is you won’t be able to see which disease is happening unless you go in the field and ground view it.”

Hyperspectral cameras share similar abilities with multispectral but can also perform full spectral sensing and mineral and surface composition surveys. They use narrow spectral bands over a continuous spectral range and give a detailed spectral signature of the target.

They also help study the vegetation spectral signature in more detail and provide more discrimination between crops.

Thermal infrared radiation sensors are best used for surface soil moisture content, heat signature detection, livestock detection, water temperature detection and surveillance.

Faria said they're being developed for use in crop management by monitoring crop temperatures and detecting crop stress.

She said water stress shows up in crops when the evaporative demand exceeds the availability of water in the soil. The crop canopy temperature rises as transpiration decreases in the plant because of a lack of evaporative cooling.
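The link between canopy temperature and water stress is often expressed as a crop water stress index, which places the measured canopy temperature between a cool, fully transpiring reference and a hot, non-transpiring one. This is a simplified sketch of that idea; the function name, the reference temperatures and the sample reading are assumptions for illustration only.

```python
def crop_water_stress_index(
    canopy_c: float, wet_ref_c: float, dry_ref_c: float
) -> float:
    """Scale canopy temperature between wet (0) and dry (1) references.

    wet_ref_c: temperature of a well-watered, fully transpiring canopy
    dry_ref_c: temperature of a non-transpiring canopy
    """
    if dry_ref_c <= wet_ref_c:
        raise ValueError("dry reference must be warmer than wet reference")
    index = (canopy_c - wet_ref_c) / (dry_ref_c - wet_ref_c)
    # Clamp readings that fall outside the reference range
    return min(1.0, max(0.0, index))


# Hypothetical thermal reading in degrees Celsius
stress = crop_water_stress_index(canopy_c=28.0, wet_ref_c=24.0, dry_ref_c=34.0)
print(round(stress, 2))
```

A value near 0 means the crop is cooling itself normally, and a value near 1 means transpiration has largely stopped.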

Radar and synthetic aperture radar (SAR) sensors are used to map topography and soil moisture at different depths, depending on the frequency. SAR provides increased ground resolution, but information will vary depending on polarization.

A radiometer collects soil brightness temperature and is used for estimating soil moisture.

Lidar sensors penetrate through foliage to the ground and measure plant height. They’re used for topography and estimating crop biomass and flood mapping. They can also be used for drainage, watercourse classification and forestry studies.
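Plant height from lidar is usually worked out by subtracting the ground elevation from the elevation of the top of the canopy at the same spot. The sketch below assumes both elevations have already been extracted per grid cell; the function name and the elevations are hypothetical.

```python
from typing import List


def canopy_heights(surface_m: List[float], ground_m: List[float]) -> List[float]:
    """Per-cell plant height: canopy-top elevation minus bare-ground elevation."""
    if len(surface_m) != len(ground_m):
        raise ValueError("surface and ground lists must be the same length")
    # Small negative differences are treated as measurement noise and set to 0
    return [max(0.0, s - g) for s, g in zip(surface_m, ground_m)]


# Hypothetical elevations in metres for four cells along a transect
surface = [101.2, 100.9, 100.4, 100.1]
ground = [100.0, 100.1, 100.2, 100.1]
print([round(h, 2) for h in canopy_heights(surface, ground)])
```

Cells where the result is close to zero may point to bare patches or poor emergence.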

She said software that helps with pre-processing and analysis is available, with more in development.

“You must correct the noise error that happens during the flight time and causes distortion in the image (pitch, roll and yaw axes influence, vignetting, lens distortion, etc),” she said.

“These can change the spectral, geometric and radiometric information of the image.”
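Of the distortions Faria lists, vignetting (the darkening toward the edges of a frame) is among the simplest to illustrate. One standard approach is flat-field correction: photograph a uniformly lit target, then divide each image by that pattern. The following sketch assumes such a flat-field frame is available; the function name and pixel values are hypothetical.

```python
from typing import List

Image = List[List[float]]


def correct_vignetting(image: Image, flat_field: Image) -> Image:
    """Rescale each pixel by the flat-field gain relative to its brightest pixel."""
    peak = max(max(row) for row in flat_field)
    corrected = []
    for img_row, flat_row in zip(image, flat_field):
        corrected.append(
            [pixel * peak / gain if gain else 0.0
             for pixel, gain in zip(img_row, flat_row)]
        )
    return corrected


# Hypothetical 2x2 example: two corners receive only half the light
flat = [[0.5, 1.0], [1.0, 0.5]]
raw = [[50.0, 100.0], [100.0, 50.0]]
print(correct_vignetting(raw, flat))
```

After correction the uniformly bright target reads the same in every pixel, which keeps later spectral calculations from being biased by position in the frame.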

The information then needs to be processed and analyzed through pixel classification, spectral signature algorithms, evaluation of the best vegetation index to apply and regression analysis.
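As an illustration of the regression step, a vegetation index measured from the air can be fitted against values sampled on the ground. This is a minimal least-squares sketch, not the method used by any particular software package; the function name `fit_line` and the plot data are hypothetical.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two or more paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical data: vegetation index per plot and ground-sampled biomass (t/ha)
index_values = [0.35, 0.48, 0.61, 0.72, 0.80]
biomass = [1.9, 2.8, 3.6, 4.5, 5.0]

slope, intercept = fit_line(index_values, biomass)
print(f"biomass = {slope:.2f} * index + {intercept:.2f}")
```

Once fitted on a handful of sampled plots, a relationship like this can be applied to every pixel in the field, although it should be checked against fresh ground samples before being relied on.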

“Depending on flight time, sensor and methodology, you can quantify areas that weren’t germinated, discriminate between weeds and crops or identify areas that were affected by diseases or insects and see the impact,” she said.

“You can also cross-reference the results with field data and use the information for decision-making, which is the ultimate goal.”

Source: http://www.producer.com/2014/12/tech-savvy-growers-already-replacing-satellite-imagery/